Inference mode complains about inplace at torch.mean call, but I don't use inplace · Issue #70177 · pytorch/pytorch · GitHub

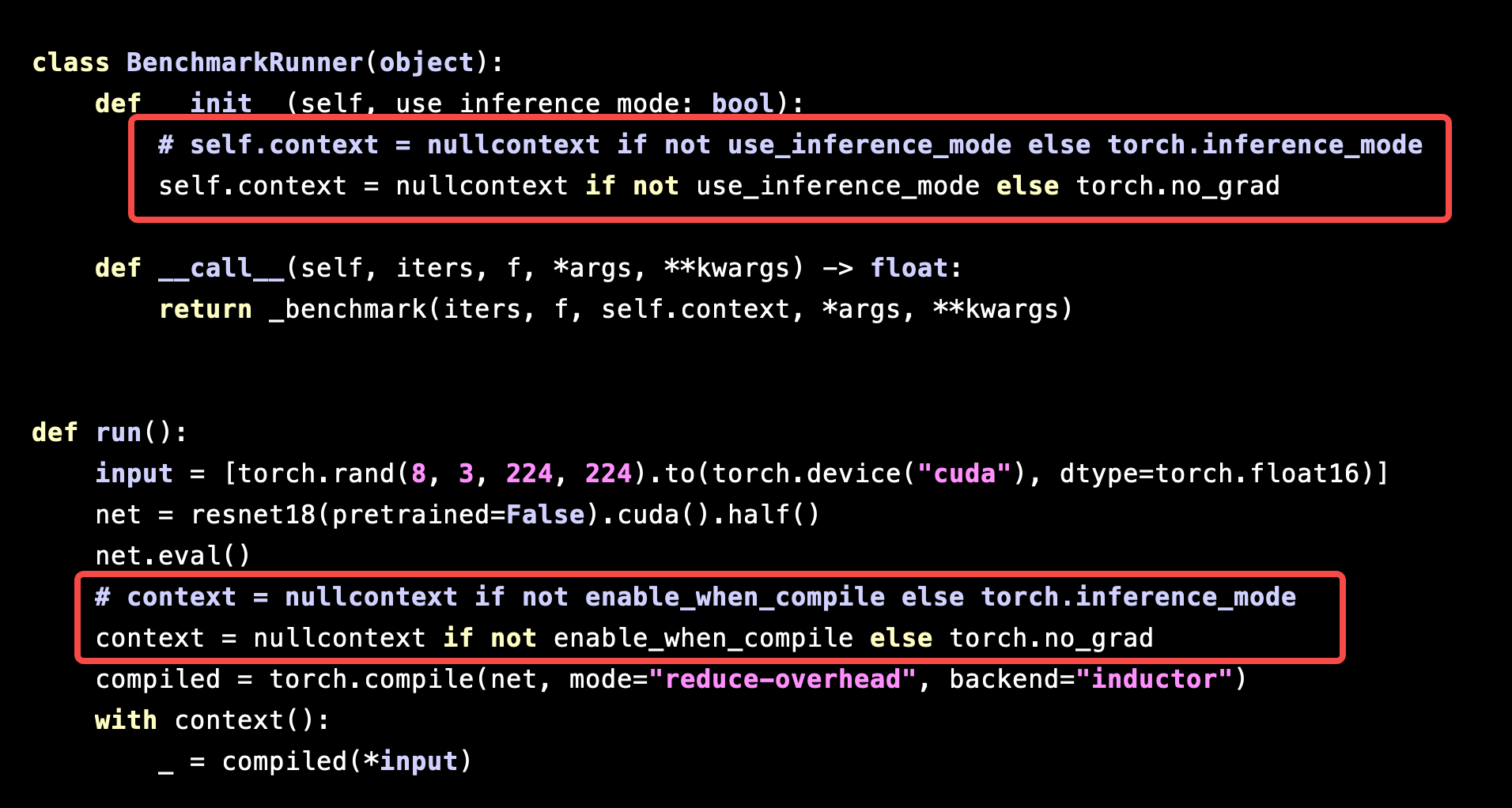

Performance of `torch.compile` is significantly slowed down under `torch.inference_mode` - torch.compile - PyTorch Forums

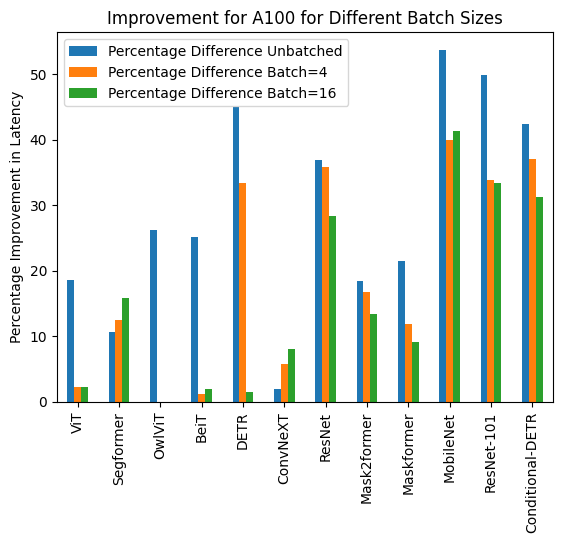

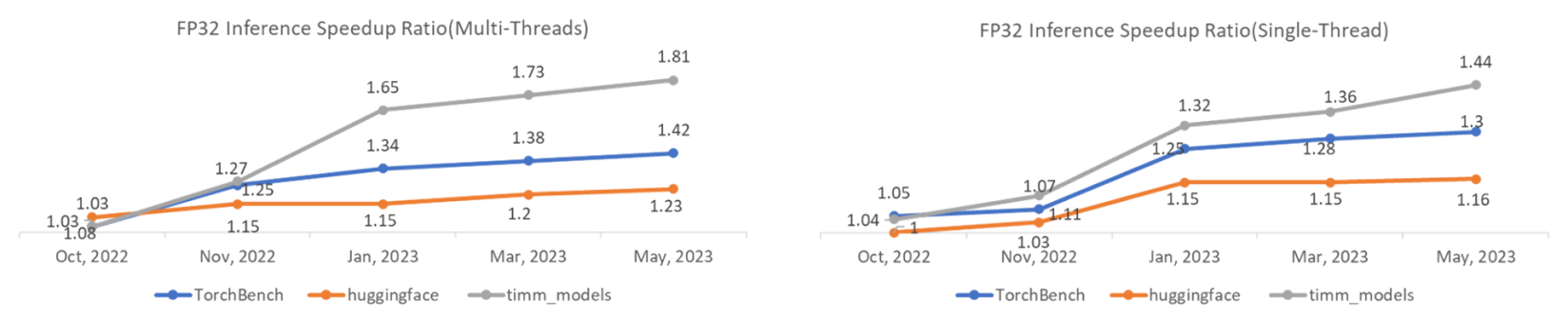

TorchDynamo Update: 1.48x geomean speedup on TorchBench CPU Inference - compiler - PyTorch Dev Discussions

Inference mode throws RuntimeError for `torch.repeat_interleave()` for big tensors · Issue #75595 · pytorch/pytorch · GitHub

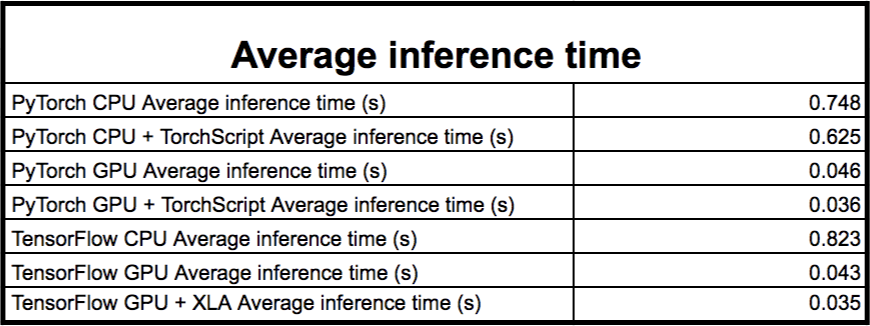

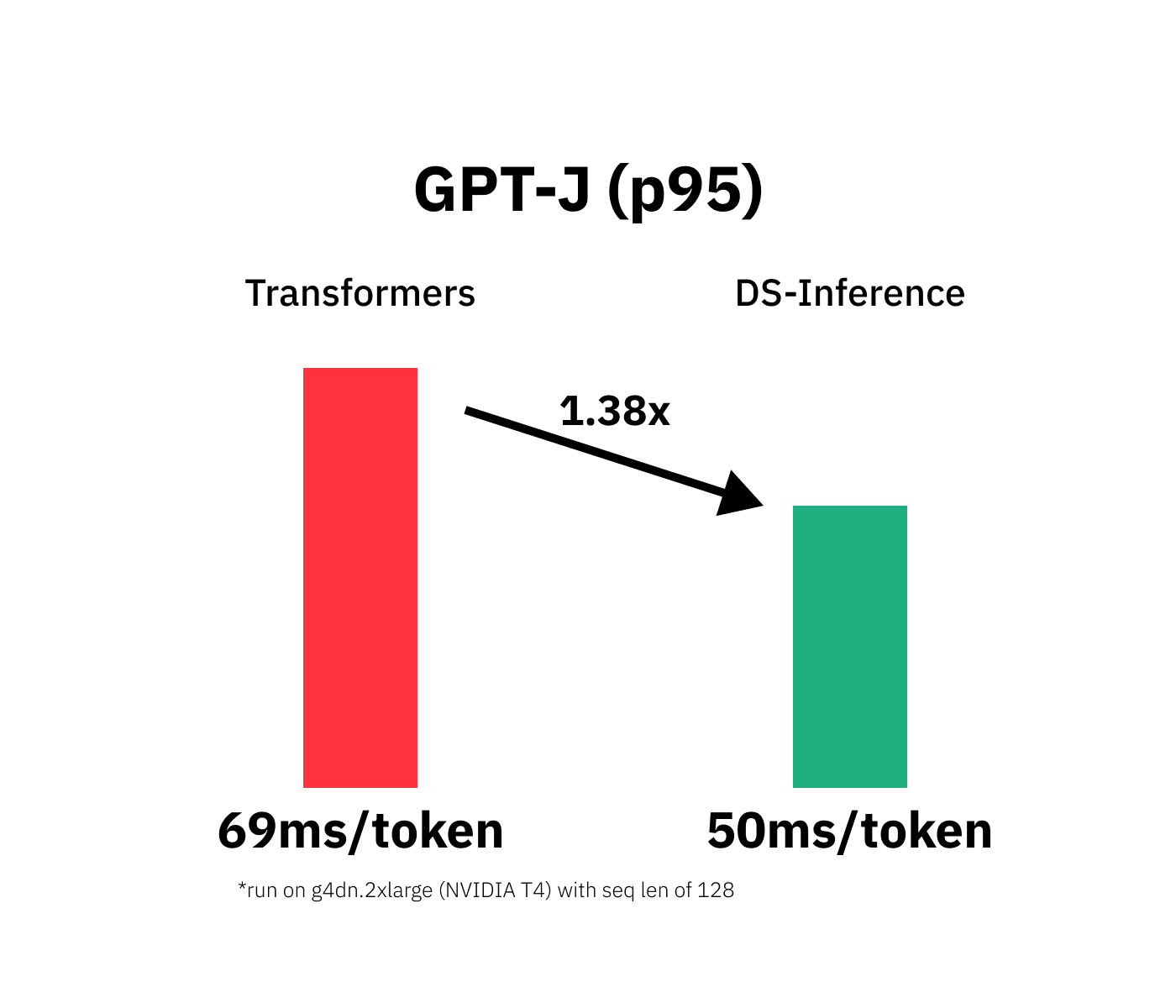

TorchServe: Increasing inference speed while improving efficiency - deployment - PyTorch Dev Discussions